How Much Energy Does AI Use?

I often get questions about the energy consumption of AI. How much energy does it take to train frontier models like GPT-4o, and should I feel guilty about generating a fun meme to share in the office group chat on a Friday afternoon?

Let’s take a deep dive!

The energy consumption of AI can be split into two categories:

- the energy required to ask questions of models (to “prompt”)
- the energy required to train models

Energy per prompt

The energy required to ask a question of ChatGPT’s simpler models, such as GPT-4o, is roughly 0.4 Wh. That’s about the same as streaming a few seconds of video on YouTube or Netflix. More advanced models use more energy. A longer “deep research” query can require over 33 Wh to deliver an answer, which is more than 80 times as much energy.[1] Generating video draws even more energy: nearly 1 kWh for a five-second clip! That’s about the energy needed to drive an electric car five kilometers.[2]

How much you prompt varies enormously from person to person, of course. I’ve written 364 prompts in the past seven days, which translates to an energy consumption of roughly 1 kWh.[3]
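If you want to check this kind of arithmetic for your own usage, here is a minimal Python sketch. The per-request figures are the ones quoted above; the function name and the mix of request types in the last example are made up for illustration and are not my actual usage breakdown. The point it shows is that 364 simple prompts alone would land well below 1 kWh, so a weekly total near 1 kWh implies that some of the prompts were heavier ones.

```python
# Per-request energy figures quoted in the text, in watt-hours (Wh).
SIMPLE_PROMPT_WH = 0.4    # a question to a simpler model such as GPT-4o
DEEP_RESEARCH_WH = 33.0   # a longer "deep research" query
VIDEO_CLIP_WH = 1000.0    # a five-second generated video clip (about 1 kWh)


def total_energy_wh(counts: dict[str, int]) -> float:
    """Sum the energy for a mix of requests, keyed by request type."""
    per_request = {
        "simple": SIMPLE_PROMPT_WH,
        "deep_research": DEEP_RESEARCH_WH,
        "video": VIDEO_CLIP_WH,
    }
    return sum(per_request[kind] * n for kind, n in counts.items())


# How many times more energy a deep research query needs than a simple prompt.
deep_vs_simple = DEEP_RESEARCH_WH / SIMPLE_PROMPT_WH

# A 364-prompt week in which every prompt goes to a simple model.
all_simple_week_wh = total_energy_wh({"simple": 364})

# HYPOTHETICAL mix, invented for illustration only (not real usage data):
# a few dozen heavier queries are enough to push the week toward 1 kWh.
mixed_week_wh = total_energy_wh({"simple": 338, "deep_research": 26})

print(f"deep research vs simple prompt: {deep_vs_simple:.1f}x")
print(f"364 simple prompts: {all_simple_week_wh:.1f} Wh")
print(f"hypothetical mixed week: {mixed_week_wh:.1f} Wh")
```

Swap in your own counts to get a rough weekly figure; the result is only as good as the per-request estimates it is built on.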

Energy for training models

A model is a digital artifact containing the condensed information from the data used to train it — which can be large portions of the text on the internet. The largest models today contain up to a trillion parameters and take up roughly one TB of storage.

Condensing a large amount of training data into a smaller model is called “training” the model. Distilling the “useful intelligence” from the training data requires a great deal of computing power, and therefore a great deal of energy.

The total energy consumption for training GPT-3 is estimated at roughly 1.3 GWh. That’s about as much energy as 76 average-sized Swedish households use in a year.

GPT-3 is by now an ancient model (it was released in June 2020), and models have since grown larger and more energy-intensive. Exactly how much energy the best available models today (such as GPT-4o, Claude 4, Gemini 2.5, or Grok 3) needed for training is hard to say, since the companies don’t publish energy consumption data. Estimates land around 50–100 GWh per model, which is up to 77 times the energy required for GPT-3,[4] or, at the upper end, the annual consumption of more than 5,800 Swedish households.
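The household comparisons can be reproduced with a few lines of Python. This is a rough sketch that uses only the figures quoted above: the annual household consumption is not an official statistic but is backed out from the GPT-3 comparison (1.3 GWh spread over 76 households), and the function name is an illustrative choice.

```python
KWH_PER_GWH = 1_000_000

GPT3_TRAINING_GWH = 1.3     # estimated training energy for GPT-3
GPT3_HOUSEHOLDS = 76        # household-years quoted for GPT-3
FRONTIER_LOW_GWH = 50.0     # lower end of the estimate for current models
FRONTIER_HIGH_GWH = 100.0   # upper end of the estimate for current models

# Annual consumption per household implied by the GPT-3 comparison (an
# assumption derived from the text, not a measured value).
household_kwh_per_year = GPT3_TRAINING_GWH * KWH_PER_GWH / GPT3_HOUSEHOLDS


def compare_to_gpt3(training_gwh: float) -> tuple[float, float]:
    """Return (multiple of GPT-3 training energy, household-years of use)."""
    times_gpt3 = training_gwh / GPT3_TRAINING_GWH
    households = training_gwh * KWH_PER_GWH / household_kwh_per_year
    return times_gpt3, households


low_times, low_households = compare_to_gpt3(FRONTIER_LOW_GWH)
high_times, high_households = compare_to_gpt3(FRONTIER_HIGH_GWH)

print(f"implied household use: {household_kwh_per_year:,.0f} kWh per year")
print(f"50 GWh:  {low_times:.1f}x GPT-3, about {low_households:,.0f} households")
print(f"100 GWh: {high_times:.1f}x GPT-3, about {high_households:,.0f} households")
```

The "77 times" and "5,800 households" figures both correspond to the 100 GWh end of the range; at 50 GWh the numbers are roughly half that.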

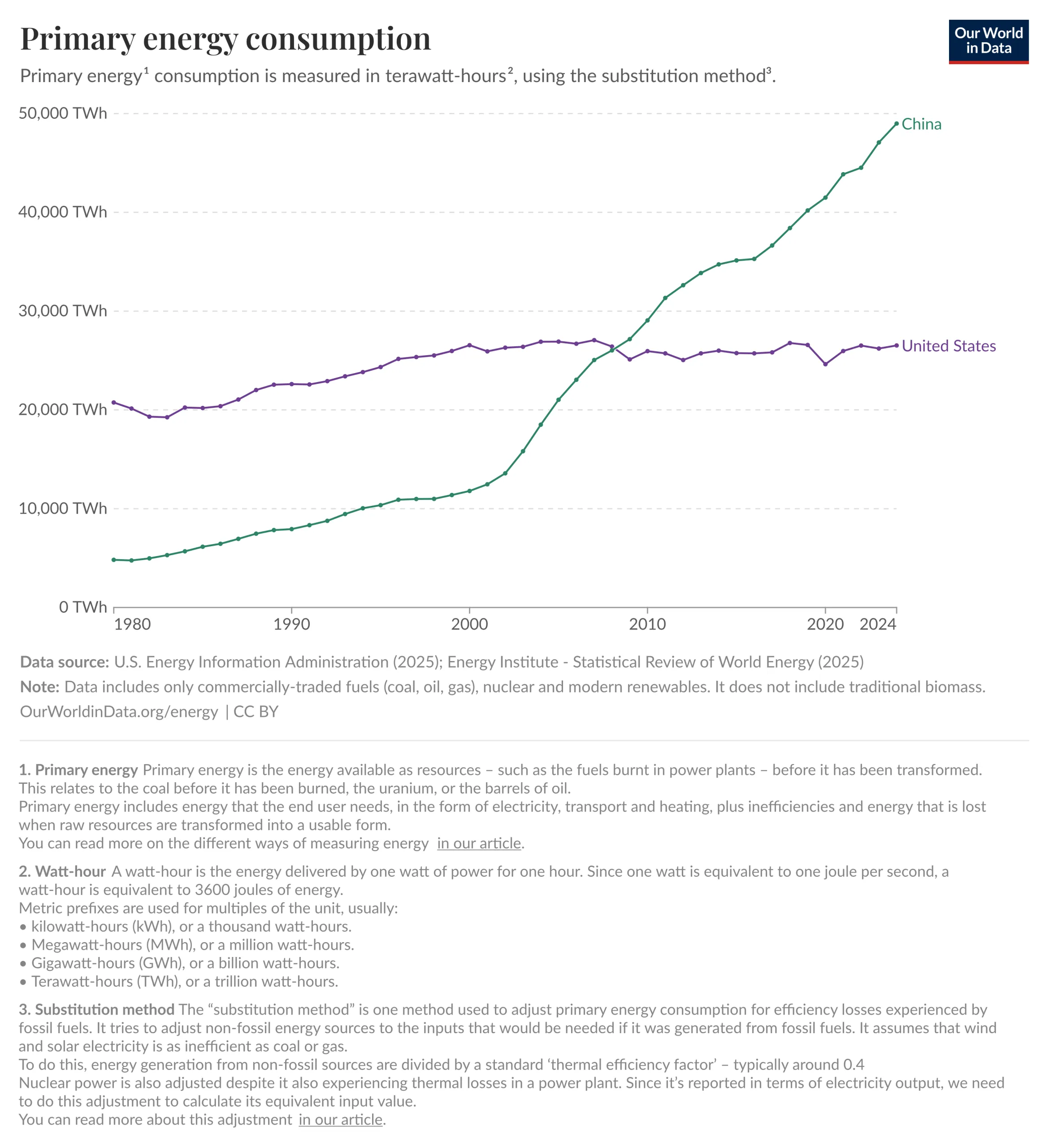

AI companies need ever more computing power to train their models, which is driving up the projected energy demand for data centers. The increase is estimated at close to 550 TWh from 2024 to 2030, roughly as much energy as Sweden consumes in a year. Electricity production for data centers is considered a strategically important issue for both the US and China. Some American commentators have raised concerns about the gap between the two countries in how fast energy production is being expanded. AI technology is viewed as a strategic resource, and if there isn’t enough available energy to train new AI models, the US risks falling behind.

How much energy does AI use?

So, to answer the opening questions:

Training large models requires enormous amounts of energy, and that need is forecast to keep growing in the coming years.

Running individual prompts doesn’t use all that much energy. You probably need to feel more guilty about the ground beef for Friday tacos than about the meme in the office chat.